Cursor Composer 2 vs Claude Opus 4.6 vs Sonnet 4.6 in 2026: Which Model Should You Use for Agentic Coding?

🎯 Quick Verdict

Composer 2 vs Claude Opus 4.6 vs Sonnet 4.6 is the most important model comparison for Cursor users in March 2026. Composer 2 launched on March 19 claiming it beats Opus 4.6 on two specialized coding benchmarks at one-tenth the cost — a claim that is mostly true, with important caveats.

On March 19, 2026, Cursor released a model that immediately reshaped the conversation about what to run inside its IDE. Composer 2 is a fine-tuned variant of the Chinese open-source model Kimi K2.5, with Cursor’s own continued pretraining and reinforcement learning on top. Cursor reports that it beats Claude Opus 4.6 on Terminal-Bench 2.0 (61.7% vs 58.0%) at $0.50 per million input tokens, one-tenth of Opus 4.6’s $5.00 input price.

The question every Cursor user is now asking is the same: do I keep paying for Claude Opus 4.6 or Claude Sonnet 4.6 inside Cursor, or does Composer 2 change the calculus entirely? And for developers already running Claude Code, does this launch change anything at all? This comparison covers every benchmark, every pricing tier, and every workflow scenario — grounded entirely in published data as of March 24, 2026. For how these models fit into the broader Claude ecosystem, see our Claude plans comparison guide. For the full AI coding tool landscape, our AI coding assistants guide covers all major alternatives side by side.

Overview: Why This Comparison Matters Now

Until March 19, 2026, choosing Cursor meant choosing which third-party model to run inside it — Claude Sonnet 4.6, Opus 4.6, GPT-5.4, or Gemini 3.1 Pro — with Anthropic and OpenAI as the ultimate performance ceiling. Composer 2 changes that equation by offering a Cursor-native model that claims to match or beat Opus 4.6 on two specialized coding benchmarks while costing a fraction of the price.

This is not just a model update. It is a strategic declaration by Cursor that it no longer wants to be a distribution channel for Anthropic and OpenAI — it wants to own the coding AI layer itself. For developers, it creates three distinct decisions: stay on Composer 2 inside Cursor and save significantly on credits, keep running Claude Sonnet 4.6 or Opus 4.6 inside Cursor for their reasoning depth, or migrate entirely to Claude Code where Opus 4.6 operates in its most powerful native environment. For context on the broader Cursor vs Claude Code decision, see our Cursor vs Windsurf vs Claude Code comparison.

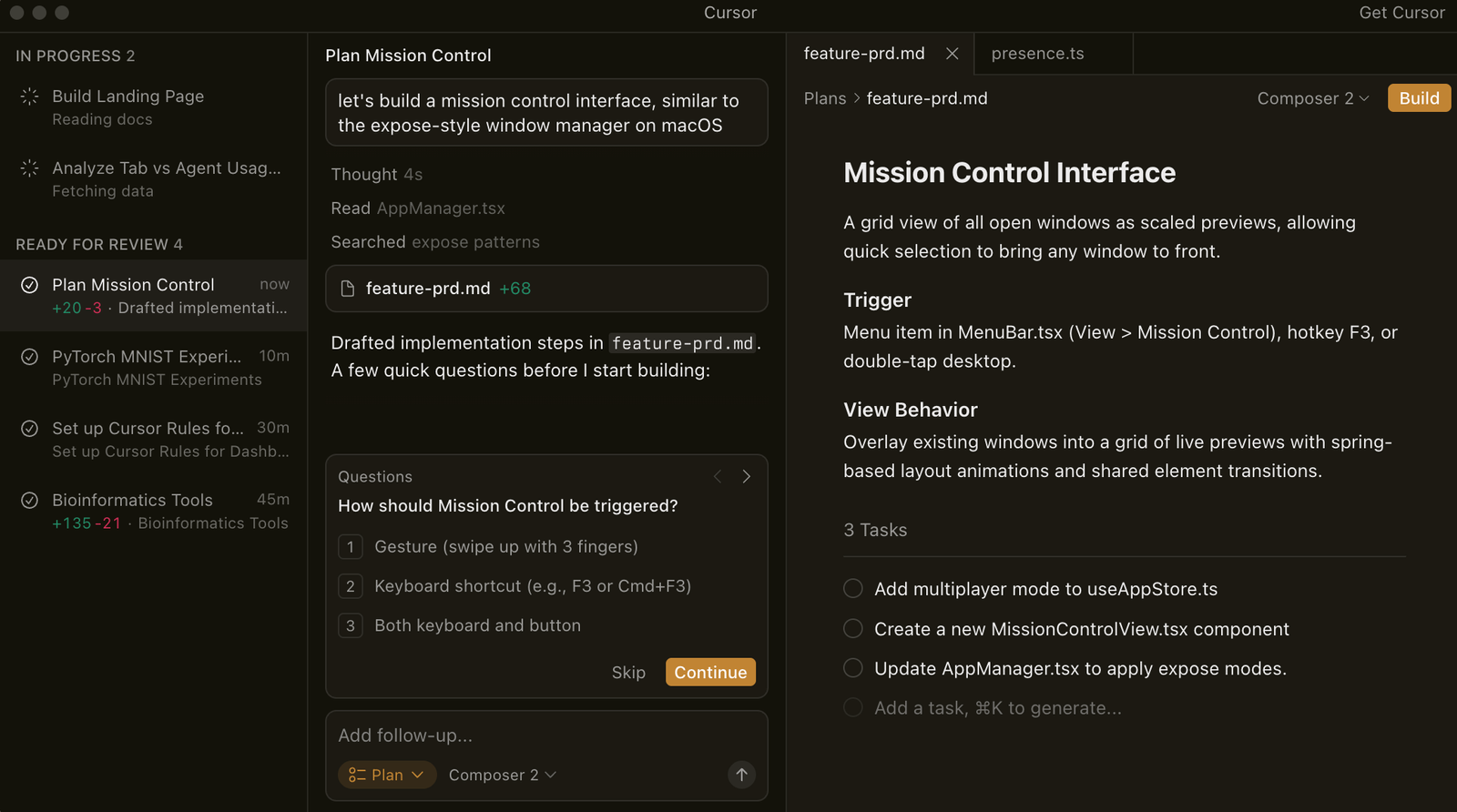

Cursor Composer 2: What It Is and What It Actually Does

Composer 2 is Cursor’s third-generation proprietary coding model, released March 19, 2026, and available exclusively inside the Cursor IDE. It supports prompts with up to 200,000 tokens, can generate code, fix bugs in existing software, and interact with a computer’s command line interface. Developers can optionally extend the model’s capabilities by providing it with access to a browser, an image generator, and other tools.

Composer 2 is built on Kimi K2.5, an open-source model from the Chinese AI company Moonshot AI, and it posts much stronger benchmark results than Cursor’s previous in-house model, Composer 1.5. Cursor is also shipping Composer 2 Fast, a higher-priced but faster variant, as the default experience for users.

What makes Composer 2 technically different from its predecessors is its training approach. Prior Composer models were built by applying reinforcement learning directly on top of a frozen base model. Composer 2 flips this: Cursor first ran continued pretraining to update the foundational model weights using coding-specific data, then applied RL on top of that stronger base. Cursor’s approach, which they call compaction-in-the-loop reinforcement learning, builds context summarization directly into the training process — when a generation sequence hits a token-length threshold, the model compresses its own context to approximately 1,000 tokens from 5,000 or more, reducing compaction error by 50% compared to prior methods.
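
To make the compaction idea concrete, here is a toy sketch of threshold-triggered context compaction. It is not Cursor's training code: it only illustrates the control flow at inference time. The 5,000 and 1,000 token figures come from the paragraph above, while `count_tokens` (one token per word) and `truncate_summary` (keep the most recent words) are hypothetical stand-ins for a real tokenizer and for the model summarizing its own context.

```python
from typing import Callable, List

COMPACT_THRESHOLD = 5_000  # tokens; trigger point taken from the figures in the text
COMPACT_TARGET = 1_000     # tokens; approximate size of the compressed context


def count_tokens(text: str) -> int:
    """Crude estimate (assumption): one token per whitespace-separated word."""
    return len(text.split())


def truncate_summary(history: List[str], budget: int) -> str:
    """Hypothetical stand-in for self-summarization: keep the most recent words."""
    words = " ".join(history).split()
    return " ".join(words[-budget:])


def run_with_compaction(
    steps: List[str],
    summarize: Callable[[List[str], int], str] = truncate_summary,
) -> List[str]:
    """Append each step to the context, compacting when the threshold is reached."""
    context: List[str] = []
    for step in steps:
        context.append(step)
        if sum(count_tokens(c) for c in context) >= COMPACT_THRESHOLD:
            # Replace the whole history with a single short summary entry.
            context = [summarize(context, COMPACT_TARGET)]
    return context


# 12 steps of 600 words each: 7,200 tokens if nothing were compacted.
steps = [" ".join(f"s{i}w{j}" for j in range(600)) for i in range(12)]
final_context = run_with_compaction(steps)
total = sum(count_tokens(c) for c in final_context)
print(len(final_context), total)
```

In this run the ninth step pushes the context to 5,400 tokens, which triggers one compaction down to 1,000 tokens, and the remaining three steps are appended to that summary. A real system would swap `truncate_summary` for a model call, and the quality of that summary is exactly what the training method described above is meant to improve.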

Cursor cofounder and research lead Aman Sanger says Composer 2 “won’t help you do your taxes” and “won’t be able to write poems” — Cursor is betting focus, not breadth, will help it compete more directly with larger rivals. This is a code-only model. It does not attempt to match Opus 4.6 on scientific reasoning, knowledge work, or general intelligence. It targets one thing: long-horizon agentic coding tasks inside Cursor.

Composer 2 Pricing

| Variant | Input Tokens | Output Tokens | Cache Read | Notes |
|---|---|---|---|---|
| Composer 2 Standard | $0.50/M | $2.50/M | $0.20/M | Lower cost, slightly slower |
| Composer 2 Fast | $1.50/M | $7.50/M | $0.35/M | Default in Cursor, same quality |
| Composer 1.5 (previous) | $3.50/M | $17.50/M | $0.35/M | Replaced; Composer 2 Standard is about 86% cheaper |
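
As a rough illustration of what these rates mean for one long agent session, the sketch below prices a single workload under each variant. The rates are copied from the table; the workload (2M fresh input tokens, 0.5M output tokens, 4M cache-read tokens) is a made-up example, and `session_cost` is a hypothetical helper, not part of any Cursor API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """Per-million-token prices in USD, copied from the pricing table."""
    input_per_m: float
    output_per_m: float
    cache_read_per_m: float


RATES = {
    "Composer 2 Standard": Rates(0.50, 2.50, 0.20),
    "Composer 2 Fast": Rates(1.50, 7.50, 0.35),
    "Composer 1.5": Rates(3.50, 17.50, 0.35),
}


def session_cost(rates: Rates, input_m: float, output_m: float, cache_m: float) -> float:
    """Cost in USD for one session; token volumes are given in millions."""
    total = (
        input_m * rates.input_per_m
        + output_m * rates.output_per_m
        + cache_m * rates.cache_read_per_m
    )
    return round(total, 2)


# Hypothetical workload: 2M fresh input, 0.5M output, 4M cache-read tokens.
costs = {name: session_cost(r, 2.0, 0.5, 4.0) for name, r in RATES.items()}
for name, usd in costs.items():
    print(f"{name}: ${usd:.2f}")
```

Under these assumptions the same session costs $3.05 on Standard, $8.15 on Fast, and $17.15 on Composer 1.5. Cache reads narrow the gap between Fast and Composer 1.5 because both charge the same $0.35/M for them.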

Claude Opus 4.6: The Benchmark Holder for Deep Coding

Claude Opus 4.6 is Anthropic’s flagship reasoning model, launched February 5, 2026. It leads SWE-bench Verified at 80.8% — the most widely cited real-world coding benchmark — and offers a 128K maximum output token ceiling that is best-in-class for generating entire file diffs, comprehensive test suites, or multi-file refactors in a single response without truncation.

What Composer 2 cannot touch is Opus 4.6’s reasoning depth outside coding. Opus 4.6 scores 91.3% vs Sonnet’s 74.1% on GPQA Diamond — a 17.2-point difference that matters for expert-level science and research tasks. Agent Teams is one of Opus 4.6’s most compelling features, and it is not available on Sonnet. Agent Teams lets you spin up multiple Claude instances that work on different parts of a project simultaneously — one agent writes unit tests while another refactors the module under test, one agent migrates database schemas while another updates the ORM layer. Composer 2 has no equivalent to this.

For developers using Claude Code — Anthropic’s terminal-native agent — Opus 4.6 is the engine underneath. Claude Code with Opus 4.6 scores 80.9% on SWE-bench, marginally above the raw Opus score, because the Claude Code scaffold is optimised for the benchmark. Composer 2 is Cursor-only. Opus 4.6 works everywhere. For a full analysis of the Claude Code vs Cursor decision, see our Cursor vs Claude Code comparison.

Claude Opus 4.6 Pricing

| Tier | Input Tokens / Plan Price | Output Tokens | Notes |
|---|---|---|---|
| Standard (≤200K context) | $5.00/M | $25.00/M | Standard API rate |
| Long Context (>200K) | $10.00/M | $37.50/M | Beta 1M token window |
| Claude Pro subscription | $20/month | n/a | Includes Opus 4.6 access |
| Claude Max $100 | $100/month | n/a | 5x Pro usage, Agent Teams |
| Claude Max $200 | $200/month | n/a | 20x Pro usage, full Opus 4.6 |
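
The two API tiers can be expressed as a small cost function. This is a sketch under one stated assumption: once a prompt exceeds 200K input tokens, the long-context rate is applied to every token in the request rather than only to the excess. Check Anthropic's pricing documentation before relying on that rule. `opus_request_cost` is a hypothetical helper name.

```python
LONG_CONTEXT_THRESHOLD = 200_000  # input tokens

STANDARD = {"input": 5.00, "output": 25.00}       # USD per million tokens
LONG_CONTEXT = {"input": 10.00, "output": 37.50}  # USD per million tokens


def opus_request_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of one Opus 4.6 API request from the table's rates.

    Assumption: a prompt above 200K tokens moves the whole request,
    input and output, onto the long-context rate.
    """
    tier = LONG_CONTEXT if input_tokens > LONG_CONTEXT_THRESHOLD else STANDARD
    cost = input_tokens / 1e6 * tier["input"] + output_tokens / 1e6 * tier["output"]
    return round(cost, 4)


small = opus_request_cost(150_000, 8_000)  # stays on the standard tier
large = opus_request_cost(400_000, 8_000)  # crosses into the long-context tier
print(small, large)
```

With these inputs, a 150K-token prompt costs about $0.95 and a 400K-token prompt about $4.30, so under this assumption a prompt 2.7 times larger costs roughly 4.5 times as much.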

Claude Sonnet 4.6: The Value Model Nobody Talks About Enough

Claude Sonnet 4.6 is consistently underrated in the Composer 2 vs Opus 4.6 conversation, and it should not be. The 1.2-point gap between Sonnet 4.6 (79.6%) and Opus 4.6 (80.8%) on SWE-bench Verified is the smallest in Claude’s history. Sonnet 4.6 now outperforms every Opus model released before 4.5. For practical coding work such as fixing bugs, implementing features, and writing tests, this gap is negligible.

Claude Sonnet 4.6 scores 79.6% on SWE-bench Verified at $3/$15 per million tokens, within 1.2 points of Opus 4.6. For teams that want Claude’s reasoning style without the Opus premium, Sonnet 4.6 handles 80%+ of coding tasks at comparable quality. Compared to Composer 2 Fast at $1.50/$7.50, Sonnet 4.6 is twice the price per token but sits 2.8 points above the 76.8% SWE-bench Verified score of the Kimi K2.5 base, the only figure available while Composer 2’s own score remains unconfirmed.

Sonnet 4.6 is also available everywhere Opus is — Claude Code, Cursor’s model selector, the API, and Claude.ai. It does not have Agent Teams, but for the majority of single-developer coding sessions, that limitation never surfaces. For teams comparing Sonnet 4.6 and Opus 4.6 specifically, our Claude plans guide breaks down which plan tier gives you access to each model.

Claude Sonnet 4.6 Pricing

| Access Method | Input Tokens / Plan Price | Output Tokens | Notes |
|---|---|---|---|
| API Standard | $3.00/M | $15.00/M | Same price as Sonnet 4.5 |
| Claude Pro subscription | $20/month | n/a | Primary model on Pro plan |
| Claude Code (Pro) | $20/month | n/a | Sonnet 4.6 default on Pro tier |
| Cursor model selector | Counts against Cursor credits | n/a | Available in all paid Cursor plans |

Benchmark Comparison: The Full Picture

| Benchmark | Composer 2 | Claude Opus 4.6 | Claude Sonnet 4.6 | Winner | Source |
|---|---|---|---|---|---|
| SWE-bench Verified | ~76.8% (Kimi K2.5 base) | 80.8% ✅ | 79.6% | Opus 4.6 | Anthropic docs, morphllm.com |
| Terminal-Bench 2.0 | 61.7% ✅ | 58.0% | ~52.1% | Composer 2 | Cursor official, VentureBeat |
| CursorBench | 61.3% ✅ | N/A | N/A | Cursor-only benchmark | Cursor official |
| SWE-bench Multilingual | 73.7% ✅ | N/A published | N/A published | Inconclusive | Cursor official |
| GPQA Diamond | Not applicable | 91.3% ✅ | 74.1% | Opus 4.6 | NxCode, Anthropic |
| Context Window | 200K tokens | 1M tokens (beta) ✅ | 1M tokens (beta) ✅ | Claude models | Anthropic docs |
| Max Output Tokens | Not published | 128K ✅ | 64K | Opus 4.6 | Anthropic docs |
| Input Token Cost | $0.50/M ✅ | $5.00/M | $3.00/M | Composer 2 | Official pricing |
| Agent Teams | ❌ | ✅ | ❌ | Opus 4.6 | Anthropic docs |
| Works outside Cursor | ❌ | ✅ | ✅ | Claude models | Product docs |

The benchmark picture is genuinely split. On Terminal-Bench 2.0, which measures how well an AI agent performs tasks in command line terminal-style interfaces, GPT-5.4 still leads at 75.1, while Composer 2 scores 61.7, ahead of Opus 4.6 at 58.0, Opus 4.5 at 52.1, and Composer 1.5 at 47.9. That is a real and meaningful lead for Composer 2 on terminal task performance inside Cursor. But on SWE-bench Verified — the benchmark that most directly predicts real-world GitHub issue resolution — Opus 4.6 leads at 80.8% versus Kimi K2.5’s base of 76.8%, with Composer 2’s actual fine-tuned score unconfirmed by independent sources at time of publication.
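
The split is easier to see when each benchmark is reduced to a leader and a margin. The sketch below uses only the firm scores quoted in this article. Approximate or unconfirmed entries, such as the Kimi K2.5 base score standing in for Composer 2 on SWE-bench Verified, are deliberately left out. `leader_and_margin` is a hypothetical helper.

```python
from typing import Dict, Tuple

# Firm scores (percent) quoted in this article; approximate entries are omitted.
SCORES: Dict[str, Dict[str, float]] = {
    "SWE-bench Verified": {"Claude Opus 4.6": 80.8, "Claude Sonnet 4.6": 79.6},
    "Terminal-Bench 2.0": {"Composer 2": 61.7, "Claude Opus 4.6": 58.0},
    "GPQA Diamond": {"Claude Opus 4.6": 91.3, "Claude Sonnet 4.6": 74.1},
}


def leader_and_margin(results: Dict[str, float]) -> Tuple[str, float]:
    """Return the top-scoring model and its lead over the runner-up, in points."""
    ranked = sorted(results.items(), key=lambda kv: kv[1], reverse=True)
    (top, top_score), (_, runner_up) = ranked[0], ranked[1]
    return top, round(top_score - runner_up, 1)


summary = {bench: leader_and_margin(r) for bench, r in SCORES.items()}
for bench, (model, margin) in summary.items():
    print(f"{bench}: {model} leads by {margin} points")
```

The margins differ by an order of magnitude: 1.2 points on SWE-bench Verified, 3.7 on Terminal-Bench 2.0, and 17.2 on GPQA Diamond. That is why the coding benchmarks alone do not settle the choice.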

Pricing Breakdown: The Numbers That Change Everything

| Model | Input ($/M) | Output ($/M) | vs Opus 4.6 | Subscription |
|---|---|---|---|---|
| Composer 2 Standard | $0.50 | $2.50 | 10x cheaper input ✅ | Cursor Pro $20/mo |
| Composer 2 Fast (default) | $1.50 | $7.50 | 3x cheaper input ✅ | Cursor Pro $20/mo |
| Claude Sonnet 4.6 | $3.00 | $15.00 | 1.7x cheaper input | Claude Pro $20/mo |
| Claude Opus 4.6 | $5.00 | $25.00 | Baseline | Claude Pro $20/mo |
| GPT-5.4 (reference) | $2.50 | $15.00 | 2x cheaper input | ChatGPT Plus $20/mo |

The pricing story for Composer 2 is compelling on paper. Composer 2 Standard at $0.50/$2.50 per million tokens is about 86% cheaper than Composer 1.5, which cost $3.50 per million input tokens and $17.50 per million output tokens. Against Opus 4.6 at $5.00/$25.00, Composer 2 Standard is a 10x improvement on input cost.

However, the pricing comparison is more nuanced in practice. For Cursor Pro subscribers paying $20/month, both Composer 2 and Claude Sonnet/Opus pull from the same credit pool. Cursor’s pricing for end users remains $20 per month for the Pro plan and $40 per user per month for the Teams plan, with a minimum monthly usage floor of $20 for individual plans. The per-token cost difference matters most for API users and teams on metered billing — for Pro subscribers with a fixed monthly credit budget, switching to Composer 2 effectively means your $20 credit pool stretches significantly further per request.
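
To put a number on how much further the pool stretches, the sketch below counts how many identical requests a $20 budget covers per model. It assumes that credits are consumed at the list per-token rates in the table and that a typical request uses 50K input and 5K output tokens. Both are illustrative assumptions, not published Cursor billing rules.

```python
from typing import Dict

# (input, output) prices in USD per million tokens, from the pricing table.
PRICES = {
    "Composer 2 Standard": (0.50, 2.50),
    "Composer 2 Fast": (1.50, 7.50),
    "Claude Sonnet 4.6": (3.00, 15.00),
    "Claude Opus 4.6": (5.00, 25.00),
}


def requests_per_budget(
    budget_usd: float, input_tokens: int, output_tokens: int
) -> Dict[str, int]:
    """Whole requests affordable per model, assuming list-price billing."""
    affordable = {}
    for model, (in_rate, out_rate) in PRICES.items():
        per_request = input_tokens / 1e6 * in_rate + output_tokens / 1e6 * out_rate
        affordable[model] = int(budget_usd // per_request)
    return affordable


# Hypothetical request size: 50K input tokens, 5K output tokens.
stretch = requests_per_budget(20.0, 50_000, 5_000)
for model, count in stretch.items():
    print(f"{model}: {count} requests")
```

Under these assumptions the same $20 covers roughly 533 requests on Composer 2 Standard, 177 on Composer 2 Fast, 88 on Sonnet 4.6, and 53 on Opus 4.6.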

Best Use Cases

Use Case 1: Long-Running Multi-File Refactors Inside Cursor — Composer 2

Problem: A developer needs to refactor a large codebase inside Cursor — renaming APIs, updating dependencies, and ensuring consistency across dozens of interconnected files — without burning through their monthly credit allocation on Opus 4.6.

Solution: Composer 2 Fast as the primary model. Its 200K context window handles full project scope. Its compaction-in-the-loop training means it maintains goal coherence across hundreds of sequential actions without losing track of the original objective — the exact scenario where Composer 1.5 would previously hit context limits and start making errors.

Outcome: Composer 2 can solve problems requiring hundreds of actions, with self-summarization for long-running tasks. The model was trained on long-horizon coding tasks. For Cursor-native developers doing exactly this work, the credit efficiency gains are significant with no meaningful quality loss on standard engineering tasks.

Use Case 2: Complex Architectural Work and Deep Reasoning — Claude Opus 4.6

Problem: A senior engineer needs to reason through a complex architectural decision — evaluating trade-offs across distributed systems patterns, reviewing security implications, and producing documentation — before implementing the solution in code.

Solution: Claude Opus 4.6, either via Claude Code or Cursor’s model selector. Composer 2 is explicitly code-only — it will not help you think through architectural trade-offs, produce written analysis, or reason about business context. Opus 4.6’s 91.3% GPQA Diamond score — a 17.2-point lead over Sonnet — is the largest performance difference between the two models on any major benchmark and matters for expert-level reasoning tasks.

Outcome: For tasks that mix deep reasoning with coding — system design, security review, technical specification writing — Opus 4.6 is the only model in this comparison that genuinely handles the full scope. Composer 2 handles the implementation once the architecture is settled.

Use Case 3: Daily Coding Work on a Budget — Claude Sonnet 4.6

Problem: A developer does the majority of their coding inside Cursor or Claude Code and wants maximum quality per dollar — without paying Opus 4.6 prices for work that Sonnet handles equally well.

Solution: Claude Sonnet 4.6 at $3/$15 per million tokens. Claude Sonnet 4.6 scores 79.6% on SWE-bench Verified — within 1.2 points of Opus 4.6 — and handles 80%+ of coding tasks at comparable quality. For the vast majority of bug fixes, feature implementations, and code reviews, Sonnet 4.6 is indistinguishable from Opus 4.6 in output quality.

Outcome: Developers running Sonnet 4.6 in Cursor or Claude Code achieve near-Opus coding quality at 40% lower per-token cost ($3/$15 versus $5/$25 per million tokens). The 1.2-point SWE-bench gap is too small to be the deciding factor in most real-world scenarios. For teams doing high-volume coding work, Sonnet 4.6 is the most defensible default choice in March 2026.

Use Case 4: Parallel Multi-Stream Engineering Projects — Claude Opus 4.6 Agent Teams

Problem: A team needs to ship a full-stack feature across frontend, backend, and database simultaneously — work that normally requires multiple developers coordinating over several days.

Solution: Claude Opus 4.6’s Agent Teams via Claude Code Max. Agent Teams lets you dispatch all tasks in parallel — one agent writes unit tests while another refactors the module under test, one agent migrates database schemas while another updates the ORM layer. Composer 2 has no equivalent. Sonnet 4.6 has no Agent Teams access. This feature is exclusively Opus 4.6.

Outcome: For teams with genuinely parallelisable engineering workloads, Agent Teams is the single feature that most changes production velocity — and it is unavailable in Composer 2 or Sonnet 4.6 regardless of IDE choice. If this workflow is your primary use case, Opus 4.6 on Claude Code Max is the only option in this comparison that delivers it.

Pros and Cons

✅ Pros

- Composer 2 — 86% Cheaper Than Its Own Predecessor: Composer 2 Standard is about 86% cheaper than Composer 1.5 on both input and output tokens ($0.50/$2.50 versus $3.50/$17.50 per million), and the default Fast variant is about 57% cheaper. For Cursor Pro subscribers, this means significantly more coding work per dollar of monthly credit.

- Composer 2 — Beats Opus 4.6 on Terminal-Bench 2.0: Composer 2 scores 61.7 on Terminal-Bench 2.0, ahead of Opus 4.6 at 58.0 and Composer 1.5 at 47.9 — a genuine benchmark lead on terminal task performance that is relevant for developers doing CLI-heavy development inside Cursor.

- Composer 2 — Deep Cursor IDE Integration: Composer 2 can access Cursor’s agent tool stack, including semantic code search, file and folder search, file reads, file edits, shell commands, browser control, and web access. This native integration can be more valuable than raw model quality for completing real software tasks inside Cursor.

- Opus 4.6 — Best SWE-bench Verified Score in Class: Opus 4.6 leads at 80.8% SWE-bench Verified — the benchmark most directly connected to real-world GitHub issue resolution. Composer 2’s equivalent score on this benchmark is unconfirmed by independent sources as of March 24, 2026.

- Opus 4.6 — Agent Teams with No Equivalent: Parallel multi-agent orchestration via Agent Teams is exclusive to Opus 4.6 within this comparison and is unavailable in Composer 2 or Sonnet 4.6. For teams with parallelisable workloads, neither alternative offers a substitute.

- Opus 4.6 — 128K Maximum Output Tokens: The 128K output ceiling is best-in-class — generating entire file diffs, full test suites, or multi-file refactors in a single response without truncation. Composer 2’s maximum output is not publicly documented.

- Sonnet 4.6 — 98% of Opus Performance at 60% of the Cost: The 1.2-point gap between Sonnet 4.6 and Opus 4.6 on SWE-bench Verified is the smallest in Claude’s history, and Sonnet 4.6 now outperforms every Opus model released before 4.5. At $3/$15 versus $5/$25 per million tokens, Sonnet costs 40% less per token. For practical coding work, the gap is negligible and the cost saving is real.

- Sonnet 4.6 — Works Everywhere: Sonnet 4.6 is available in Claude Code, Cursor, the API, and Claude.ai. Unlike Composer 2 which is Cursor-exclusive, Sonnet 4.6 is portable across every development environment and does not lock you into a single IDE.

❌ Cons

- Composer 2 — Built on a Chinese Open-Source Model Without Initial Disclosure: A developer named Fynn discovered Composer 2’s true identity while debugging — the API response returned the model identifier kimi-k2p5-rl-0317-s515-fast rather than “Composer 2.” Cursor confirmed the Kimi K2.5 base the following day. The lack of upfront disclosure has raised legitimate questions about transparency and model provenance that some enterprise teams will find difficult to overlook.

- Composer 2 — Cursor-Exclusive, No External Access: Composer 2 is only available inside the Cursor IDE. Developers who use multiple tools, terminal-first workflows, or Claude Code cannot access it. Unlike Opus 4.6 or Sonnet 4.6, you cannot call Composer 2 via an API or use it in any other context.

- Composer 2 — No Agent Teams, No Deep Reasoning: Composer 2 explicitly does not handle non-coding tasks. It has no Agent Teams equivalent, no multi-agent orchestration, and no meaningful performance on scientific reasoning benchmarks like GPQA Diamond. For work that mixes coding with architectural thinking or research, it is the wrong tool.

- Composer 2 — SWE-bench Verified Score Unconfirmed: Cursor’s launch did not include an independently verified SWE-bench Verified score for Composer 2 against comparable model harnesses. The Kimi K2.5 base scored 76.8% — meaningful but trailing Opus 4.6’s 80.8% and Sonnet 4.6’s 79.6% by a margin that matters on complex real-world issues.

- Opus 4.6 — 10x More Expensive Than Composer 2 Standard: At $5.00/$25.00 per million tokens versus Composer 2 Standard’s $0.50/$2.50, Opus 4.6 carries a cost premium that is difficult to justify for standard coding tasks that Composer 2 or Sonnet 4.6 handle equally well. Above 200K context, Opus moves to $10.00/$37.50 per million tokens, making long-context workloads significantly more expensive.

- Sonnet 4.6 — No Agent Teams Access: Sonnet 4.6’s single most meaningful limitation versus Opus 4.6 is the absence of Agent Teams. For teams that have adopted parallel multi-agent engineering workflows, Sonnet 4.6 cannot replicate what Opus 4.6 delivers — regardless of how close the benchmark scores are.

Final Verdict

The Composer 2 vs Claude Opus 4.6 vs Sonnet 4.6 comparison in March 2026 has a clearer winner per workflow than most model comparisons — because each model is genuinely optimised for a different use case rather than all three competing on the same axis.

Choose Composer 2 if you are a Cursor Pro subscriber doing the majority of your coding inside the Cursor IDE and want to stretch your monthly credit budget significantly further without sacrificing agentic coding quality on standard engineering tasks. Composer 2 is a code-only model built for multi-file edits, refactoring, and long-running coding tasks inside its editor. It is not trying to be Opus 4.6. It is trying to be the best model for what Cursor developers actually do most — and on Terminal-Bench 2.0, it beats Opus 4.6 at one-tenth the token cost. The Kimi K2.5 disclosure controversy is a legitimate concern for enterprise teams, but for individual developers, the performance-to-price ratio is compelling.

Choose Claude Opus 4.6 if your work demands the deepest reasoning, Agent Teams parallel orchestration, the 128K output ceiling, or a model that operates beyond code into architectural thinking and knowledge work. Commenters on X have increasingly described moving from Cursor to Anthropic’s Claude Code, especially among power users drawn to terminal-first workflows, longer-running agent behaviour, and lower perceived overhead. If that describes you, Claude Code with Opus 4.6 is where the highest ceiling currently sits — and Composer 2 cannot replicate what Agent Teams delivers.

Choose Claude Sonnet 4.6 if you want the pragmatic middle ground: Claude’s reasoning quality and intent understanding, near-Opus coding performance at 79.6% SWE-bench, and the portability to use it across Cursor, Claude Code, and the API without being locked into any single tool. Sonnet 4.6 handles 80%+ of coding tasks at comparable quality to Opus at three-fifths of the price ($3/$15 versus $5/$25 per million tokens). For the majority of professional developers, Sonnet 4.6 is the most defensible default in March 2026. It also makes Composer 2’s cost advantage over Opus feel less significant, because the real comparison is Composer 2 versus Sonnet, not Composer 2 versus Opus.
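
The three recommendations can be condensed into a small decision helper. This is only a sketch of the reasoning in this verdict, not an official selection rule. The `Workflow` fields and the order in which they are checked are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    """Hypothetical description of a developer's needs."""
    needs_agent_teams: bool = False
    needs_non_coding_reasoning: bool = False
    works_only_in_cursor: bool = False


def recommend(workflow: Workflow) -> str:
    """Map a workflow to the model this article's verdict points to."""
    # Agent Teams and deep non-coding reasoning are Opus-only in this comparison.
    if workflow.needs_agent_teams or workflow.needs_non_coding_reasoning:
        return "Claude Opus 4.6"
    # Composer 2 is Cursor-exclusive, so it only applies to Cursor-only workflows.
    if workflow.works_only_in_cursor:
        return "Composer 2"
    # Portable default for everyone else.
    return "Claude Sonnet 4.6"


print(recommend(Workflow(works_only_in_cursor=True)))
print(recommend(Workflow(needs_agent_teams=True, works_only_in_cursor=True)))
print(recommend(Workflow()))
```

The ordering encodes one judgment call: a hard requirement such as Agent Teams outranks cost, so a Cursor-only developer who needs it is still pointed to Opus 4.6.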

❓ Frequently Asked Questions

Does Composer 2 beat Claude Opus 4.6?

Partially. Composer 2 beats Opus 4.6 on Terminal-Bench 2.0 (61.7% vs 58.0%) and CursorBench, and does so at one-tenth the token cost. However, Opus 4.6 leads on SWE-bench Verified (80.8% vs Kimi K2.5’s 76.8% base), has a 128K output ceiling, Agent Teams, and works outside Cursor. The honest answer is that Composer 2 wins on terminal task performance and cost efficiency, while Opus 4.6 wins on coding quality depth and reasoning breadth.

Is Composer 2 better than Claude Sonnet 4.6?

On cost, yes — Composer 2 Fast at $1.50/M input is half the price of Sonnet 4.6 at $3.00/M. On coding quality, Sonnet 4.6 likely leads — its 79.6% SWE-bench Verified score is above Kimi K2.5’s 76.8% base, and Sonnet works across every development environment while Composer 2 is Cursor-exclusive. For Cursor-only developers, Composer 2 is compelling on cost. For developers who switch between tools, Sonnet 4.6 is the more versatile choice.

What is Composer 2 built on?

Composer 2 is a fine-tuned variant of Kimi K2.5, the open-source model from Chinese AI company Moonshot AI. Cursor confirmed the Kimi K2.5 base on March 20, 2026, after a developer discovered the model identifier in API response headers. Cursor applied continued pretraining on coding data and its own reinforcement learning on top of the Kimi K2.5 foundation. Approximately 25% of the model’s computational foundation derives from the original Kimi K2.5 architecture, according to Cursor’s VP of Developer Education.

How much does Composer 2 cost?

Composer 2 Standard costs $0.50 per million input tokens and $2.50 per million output tokens. Composer 2 Fast, the default inside Cursor, costs $1.50/$7.50 per million tokens. Against Composer 1.5 at $3.50/$17.50, Standard is about 86% cheaper and Fast is about 57% cheaper. Cache read pricing is $0.20/M for Standard and $0.35/M for Fast.

Can I use Composer 2 outside of Cursor?

No. Composer 2 is exclusively available inside the Cursor IDE. It is not available via an external API, inside other IDEs, or as a standalone tool. If you want to use the underlying base model, Kimi K2.5 is available as an open-source model from Moonshot AI — but Cursor’s fine-tuned Composer 2 variant is Cursor-only.

Should I switch from Claude Opus 4.6 to Composer 2 in Cursor?

For most Cursor Pro users doing standard coding tasks — refactoring, bug fixing, feature implementation — Composer 2 Fast is worth trying as a default. It stretches your credit budget significantly further and beats Opus 4.6 on terminal task benchmarks. Keep Opus 4.6 available for the tasks where its reasoning depth and 128K output ceiling genuinely matter: complex architectural work, Agent Teams workflows, and anything that requires non-coding reasoning.

What is the context window for Composer 2 vs Claude Opus 4.6?

Composer 2 supports a 200,000-token context window. Claude Opus 4.6 supports up to 1 million tokens in beta — five times larger. For the majority of single-session coding tasks, 200K tokens is sufficient. For developers working with very large codebases, long-running sessions, or multi-file projects that require holding the full repository in context simultaneously, Opus 4.6’s 1M token window is a meaningful advantage.
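
A quick way to check which side of that line a project falls on is to estimate its token count and compare it with each window. The sketch below uses the context sizes quoted in this article and a rough heuristic of four characters per token. The heuristic is an assumption: real tokenizers vary by language and content. Both helper functions are hypothetical.

```python
from typing import List

# Context windows in tokens, as quoted in this article (1M is a beta limit).
CONTEXT_WINDOWS = {
    "Composer 2": 200_000,
    "Claude Opus 4.6": 1_000_000,
    "Claude Sonnet 4.6": 1_000_000,
}

CHARS_PER_TOKEN = 4  # rough heuristic (assumption), not a real tokenizer


def estimate_tokens(num_chars: int) -> int:
    """Estimate token count from character count, rounding up."""
    return -(-num_chars // CHARS_PER_TOKEN)


def models_that_fit(prompt_tokens: int) -> List[str]:
    """List the models whose context window can hold the whole prompt."""
    return [m for m, window in CONTEXT_WINDOWS.items() if prompt_tokens <= window]


repo_tokens = estimate_tokens(2_400_000)  # a repository of about 2.4M characters
print(repo_tokens, models_that_fit(repo_tokens))
print(models_that_fit(150_000))
```

A repository of about 2.4 million characters comes out near 600K tokens, which only the 1M-token Claude windows can hold in a single prompt. A 150K-token prompt fits all three models.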
